WorldScape: A Unified Real-time World Model Integrating Locomotion and Manipulation

What Is WorldScape?

Today we are releasing WorldScape, a unified real-time world model that integrates locomotion and manipulation.

The ultimate vision for world models is to create an infinite, realistic "virtual laboratory" for AI. By internally simulating how an environment evolves, a world model lets agents explore, learn, and make decisions freely in a virtual world, without costly trial and error in the real one. With the rapid progress of video generation models, this "imagined world" has become clearer than ever, and a long-held goal of researchers, building a world that is at once realistic, interactive, and persistent, is gradually coming within reach.

Existing interactive world models still share structural shortcomings. Most excel in only a few dimensions: some support real-time generation but lack spatial consistency, while others offer strong geometric stability but struggle with online interaction. Interaction is often limited to a single control mode, so navigation and manipulation are rarely supported together. Meanwhile, limited long-term memory makes it hard for the generated world to stay consistent over long interactions. Balancing general interactivity, 3D consistency, real-time performance, and memory capacity in general scenarios therefore remains an open core challenge.

Key Features of WorldScape

WorldScape achieves leading performance across four core dimensions at once:

1. A comprehensive interactive experience, not a single interaction mode: WorldScape integrates spatial movement and object interaction into one generation process through a unified framework that models actions and world states together. This avoids the inconsistencies caused by stitching multiple modules together, so the model supports spatial navigation and object manipulation simultaneously.

2. A more stable and reliable 3D world structure: WorldScape explicitly introduces 3D geometry-aware spatial representations and constraints during training, so that generated results keep a consistent spatial structure across continuous interactions. This design effectively mitigates the geometric drift and structural collapse common in long-horizon generation.

3. High visual quality under real-time generation: WorldScape does not rely simply on model compression or lower resolution for efficiency. Instead, through structured generation and efficient training strategies, it achieves near-real-time interactive generation (up to 24 FPS) on a single GPU while ranking among the top models on visual metrics such as imaging quality and motion smoothness, combining speed with quality.

4. A world with "memory": Long-term consistency is what distinguishes a world model from a video generation model. WorldScape uses a geometry-aware world-state memory mechanism that lets the model share and update spatial information across time steps.

Technical Details of WorldScape

1. Spatial Consistency Training: Geometrically Constrained Generative Framework


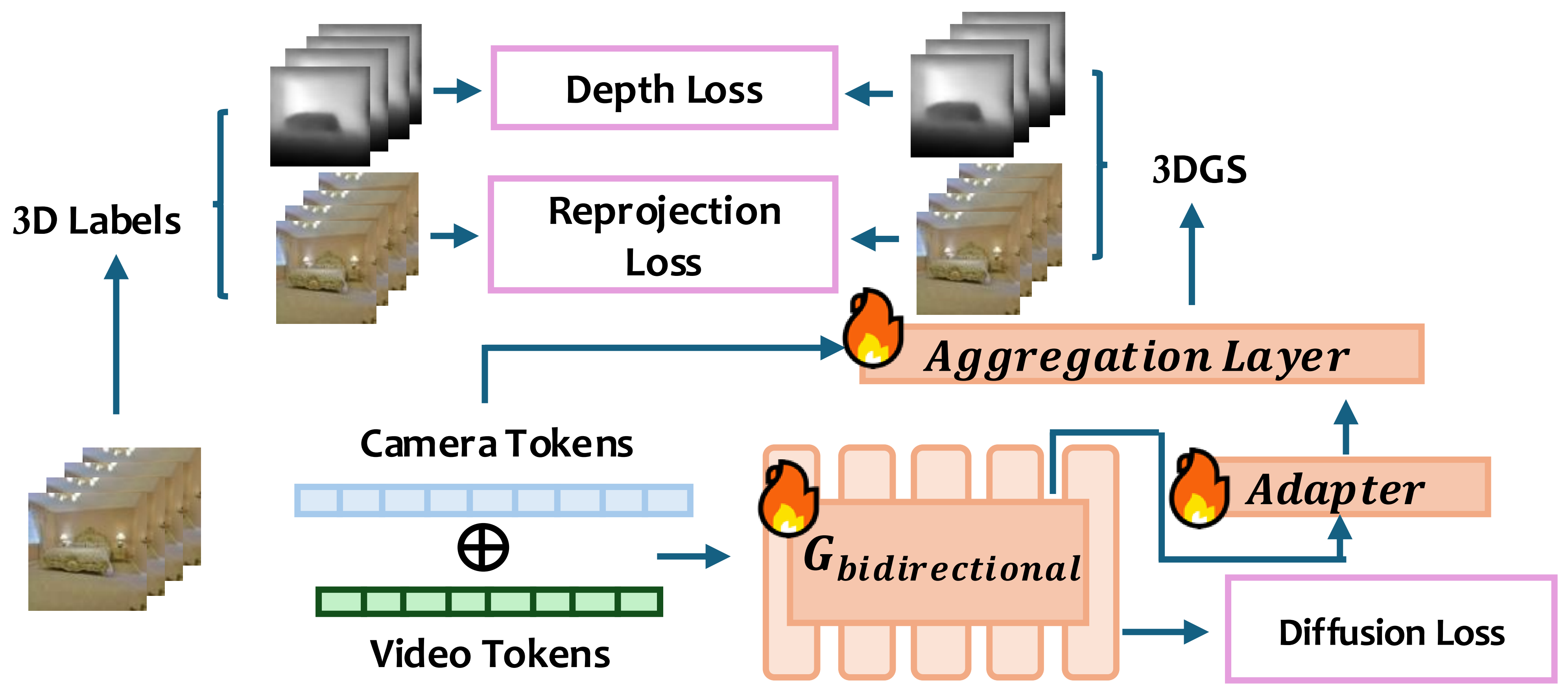

- Joint Optimization of Geometric Constraints:

WorldScape's training is supervised jointly by a flow-matching loss and complementary 3D geometric signals (depth and 3D Gaussian splatting). Optimizing the combined loss imposes strong constraints on the scene structure and spatial relationships of the generated content.

- End-to-End 3D Reconstruction Branch:

WorldScape passes the latents generated by the DiT backbone through a lightweight adapter into a 3DGS aggregator that reconstructs the 3D scene. Comparing the rendered depth maps and RGB images against ground truth forces the model to follow rigorous spatial and physical logic when generating each frame, significantly reducing distortion of the scene's spatial topology.
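The joint objective described above can be sketched as a weighted sum of a flow-matching term and rendering losses. This is a minimal illustration, not WorldScape's implementation: it assumes a rectified-flow formulation (x_t = (1 - t)·x0 + t·ε, with target velocity ε - x0), L1 penalties on rendered depth and RGB, and hypothetical weights `lambda_depth` and `lambda_rgb`. The adapter and 3DGS aggregator are not specified in the text, so their rendered outputs are simply passed in as lists.

```python
def mse(pred, target):
    """Mean squared error between two equal-length sequences."""
    assert len(pred) == len(target)
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(pred)


def l1(pred, target):
    """Mean absolute error between two equal-length sequences."""
    assert len(pred) == len(target)
    return sum(abs(p - q) for p, q in zip(pred, target)) / len(pred)


def flow_matching_loss(velocity_fn, x0, noise, t):
    """Rectified-flow loss for one sample at time t in [0, 1] (assumed formulation)."""
    # Straight-line interpolation between data x0 (t = 0) and noise (t = 1).
    x_t = [(1.0 - t) * a + t * n for a, n in zip(x0, noise)]
    # The velocity of that path, d x_t / d t = noise - x0, is the regression target.
    target = [n - a for a, n in zip(x0, noise)]
    return mse(velocity_fn(x_t, t), target)


def total_loss(l_fm, depth_pred, depth_gt, rgb_pred, rgb_gt,
               lambda_depth=0.5, lambda_rgb=1.0):
    """Flow matching plus geometric supervision from the reconstruction branch.

    depth_pred / rgb_pred stand in for renders of the 3DGS scene that a
    hypothetical adapter + aggregator would build from the DiT latents.
    """
    l_depth = l1(depth_pred, depth_gt)
    l_rgb = l1(rgb_pred, rgb_gt)
    return l_fm + lambda_depth * l_depth + lambda_rgb * l_rgb


# Toy usage: an oracle velocity predictor gives zero flow-matching loss.
x0, noise = [1.0, 2.0], [0.0, 0.0]


def oracle(x_t, t):
    return [n - a for a, n in zip(x0, noise)]


l_fm = flow_matching_loss(oracle, x0, noise, t=0.5)
print(total_loss(l_fm, [1.0], [2.0], [0.3], [0.3]))  # prints 0.5
```

Because the geometric terms are added to the generative loss rather than trained separately, gradients from depth and RGB errors flow back into the same backbone that produces the latents.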

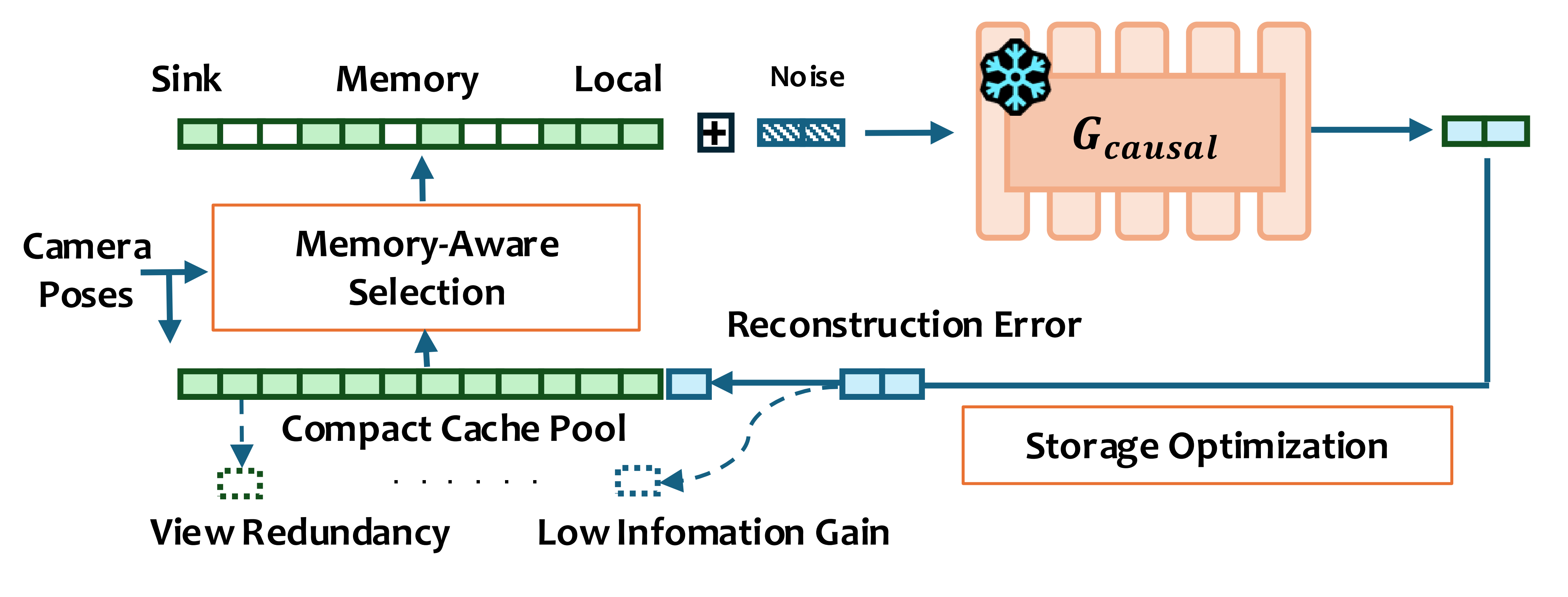

2. Memory-aware KV Cache Optimization: Efficient Long Sequence Consistency

Long video generation makes it hard to balance GPU memory growth against long-term consistency: keeping every past frame in the KV cache makes memory explode, while discarding history breaks consistency. To address this, WorldScape proposes a KV cache optimization strategy that leverages camera-trajectory priors and achieves sublinear GPU memory complexity through a three-level hierarchy: permanent anchor points, a global memory pool, and a local sliding window.
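The three-level hierarchy can be sketched as a small cache class. This is an illustrative sketch under stated assumptions, not WorldScape's code: the capacities are hypothetical, each entry stands in for one frame's key/value block, and the pool uses oldest-first eviction as a placeholder where the text describes surprise-gated admission and farthest point sampling. Because every tier has a fixed capacity, the attended context stays bounded however long the sequence grows.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class HierarchicalKVCache:
    """Hypothetical three-tier cache: anchors, global pool, local window."""
    num_anchors: int = 1      # permanent anchor frames, never evicted
    pool_capacity: int = 8    # global memory pool of older key frames
    window_size: int = 4      # most recent frames, kept in full
    anchors: list = field(default_factory=list)
    pool: list = field(default_factory=list)
    window: deque = field(default_factory=deque)

    def append(self, frame_id, kv):
        entry = (frame_id, kv)
        # The first frames become permanent anchors.
        if len(self.anchors) < self.num_anchors:
            self.anchors.append(entry)
            return
        self.window.append(entry)
        # Frames leaving the sliding window are offered to the global pool.
        if len(self.window) > self.window_size:
            self._admit_to_pool(self.window.popleft())

    def _admit_to_pool(self, entry):
        self.pool.append(entry)
        if len(self.pool) > self.pool_capacity:
            # Placeholder eviction (oldest first); the text describes
            # surprise gating and farthest point sampling instead.
            self.pool.pop(0)

    def context_ids(self):
        """Frame ids the current chunk would attend to."""
        return [fid for fid, _ in [*self.anchors, *self.pool, *self.window]]


cache = HierarchicalKVCache(num_anchors=1, pool_capacity=2, window_size=2)
for frame_id in range(10):
    cache.append(frame_id, kv=f"kv{frame_id}")
print(cache.context_ids())  # [0, 6, 7, 8, 9]
```

The anchor tier preserves the scene's starting reference, the window keeps short-range motion smooth, and the pool is the only tier whose contents need a selection policy.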

- Memory Information Acquisition - Viewpoint-Oriented Cache Retrieval: Using the camera extrinsic matrices, the model obtains the position and orientation associated with the current frame and with each historical frame. It then uses a geometric similarity score S(i, j) to preferentially retrieve the scene memories most relevant to the current viewpoint, so that it can still accurately "recall" what previously seen objects looked like as the camera moves.

- Reducing Computational Overhead - Gated Deduplication and Global Pruning: Inspired by the information-theoretic Minimum Description Length (MDL) principle, WorldScape uses a greedy algorithm to evaluate the "surprise" of new information in real time, actively retaining visual features that are hard to reconstruct from existing memories and discarding redundant ones. This lets it expand scene capacity within a limited GPU memory budget. When the memory pool is full, farthest point sampling is used as an approximate optimization: based on translation and rotation, it dynamically selects the most representative key viewpoints, so the global spatial layout stays consistent even after long camera paths.

- Finally, the memory-pool size is set according to the available budget. Given that size, one can obtain the minimum amount of historical anchor information needed to keep the reconstruction of the generated 3D space above a lower bound on recovery frequency.
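The retrieval, gating, and pruning steps can be illustrated with a few small functions. The text does not give the form of S(i, j), the surprise measure, or the pose distance used for farthest point sampling, so every formula below is a hypothetical stand-in. A pose is a (camera center, unit viewing direction) pair, which could be derived from an extrinsic matrix [R | t] (the camera center is -R^T t). S(i, j) is the cosine between viewing directions damped by distance with a hypothetical scale `sigma`; surprise is the distance to the nearest stored feature; and the pruning distance adds translation to a weighted rotation angle (`alpha`).

```python
import math


def _dist(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def similarity(pose_i, pose_j, sigma=2.0):
    """Hypothetical S(i, j): view-direction agreement damped by distance."""
    (c_i, d_i), (c_j, d_j) = pose_i, pose_j
    return _dot(d_i, d_j) * math.exp(-_dist(c_i, c_j) / sigma)


def retrieve(current_pose, memory_poses, k=2):
    """Indices of the k cached views most relevant to the current viewpoint."""
    scores = [similarity(current_pose, m) for m in memory_poses]
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


def surprise(feature, memory):
    """MDL-inspired proxy: error of the best single-memory reconstruction."""
    return min((_dist(feature, m) for m in memory), default=math.inf)


def gated_admit(feature, memory, threshold=0.5):
    """Greedy gate: store a feature only if existing memory explains it poorly."""
    if surprise(feature, memory) > threshold:
        memory.append(feature)
        return True
    return False


def pose_distance(pose_a, pose_b, alpha=1.0):
    """Translation distance plus alpha times the angle between view directions."""
    (c_a, d_a), (c_b, d_b) = pose_a, pose_b
    angle = math.acos(max(-1.0, min(1.0, _dot(d_a, d_b))))  # clamp for safety
    return _dist(c_a, c_b) + alpha * angle


def farthest_point_sampling(poses, budget, start=0):
    """Greedily keep `budget` (>= 1) key views that are maximally spread out."""
    selected = [start]
    min_d = [pose_distance(p, poses[start]) for p in poses]
    while len(selected) < min(budget, len(poses)):
        candidates = [i for i in range(len(poses)) if i not in selected]
        nxt = max(candidates, key=lambda i: min_d[i])
        selected.append(nxt)
        # Each pose's distance to the nearest selected view.
        min_d = [min(d, pose_distance(p, poses[nxt])) for d, p in zip(min_d, poses)]
    return selected


fwd = (1.0, 0.0, 0.0)
memory_poses = [((0.0, 0.0, 0.0), fwd), ((10.0, 0.0, 0.0), fwd),
                ((1.0, 0.0, 0.0), (-1.0, 0.0, 0.0))]
print(retrieve(((0.5, 0.0, 0.0), fwd), memory_poses, k=1))  # [0]
```

In the usage example the nearby view facing the opposite direction scores lowest, which is the intended effect of weighting by viewing direction rather than by position alone.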

3. Universal Interaction Control: Locomotion and Manipulation

4. Distillation: Real-time Interaction on a Single GPU

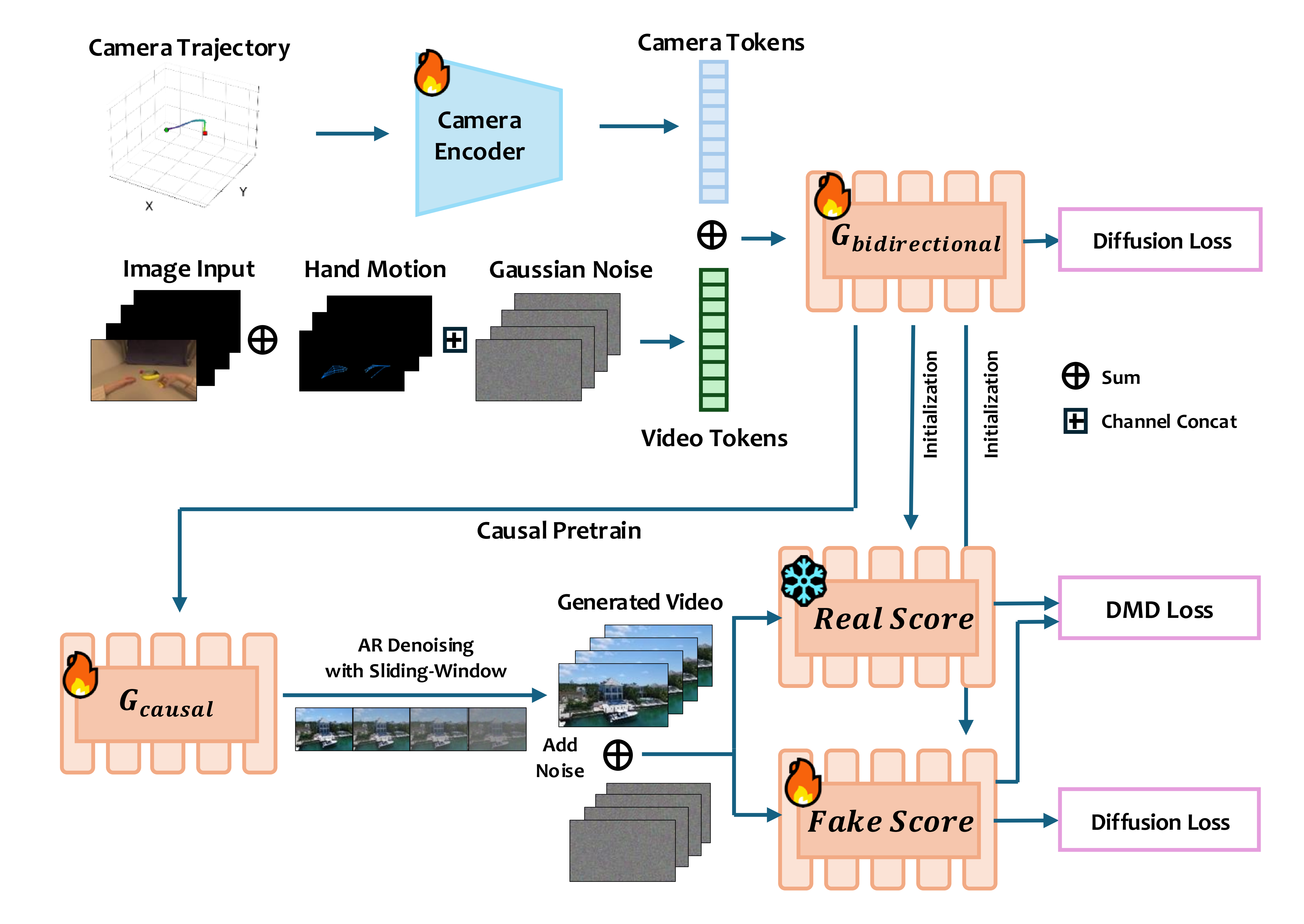

Diffusion models deliver high quality but are computationally expensive: if each video segment takes several minutes to generate, real-time interaction is impossible.
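The real-time constraint can be made concrete with a little arithmetic. At the target of up to 24 FPS stated for WorldScape, each frame has about 41.7 ms of wall-clock budget; the sketch below turns that into the number of denoising passes that fit in one chunk. The chunk length and per-step cost are hypothetical inputs, and the calculation ignores VAE decoding and other overheads.

```python
import math


def frame_budget_ms(fps):
    """Wall-clock milliseconds available per frame to sustain `fps`."""
    return 1000.0 / fps


def max_denoising_steps(fps, frames_per_chunk, ms_per_step):
    """Full denoising passes over one chunk that fit in its time budget."""
    chunk_budget_ms = frame_budget_ms(fps) * frames_per_chunk
    return math.floor(chunk_budget_ms / ms_per_step)


# Hypothetical numbers: 4-frame chunks, 40 ms per network evaluation.
print(round(frame_budget_ms(24), 1))     # 41.7
print(max_denoising_steps(24, 4, 40.0))  # 4
```

Under these assumptions only a handful of network evaluations fit per chunk, far fewer than a sampler needing tens of steps, which is why a few-step distilled student is needed.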

WorldScape adopts an asymmetric distillation architecture based on Self Forcing:

- First, train a full, unified, interactive, and controllable diffusion model with bidirectional attention;

- Then apply distribution matching distillation (DMD) to distill it into a causal, autoregressive diffusion model that generates video chunk by chunk;

- Finally, use sliding-window autoregressive denoising to relax the strict causality of Self Forcing, letting chunks attend to each other during denoising so that camera motion is smoother at chunk boundaries.
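The difference between strict chunk-level causality and the relaxed sliding-window variant can be pictured as attention masks over chunks. This is a hypothetical reading of the description, not WorldScape's code: it assumes that a window of `window` consecutive chunks is denoised together and rolls forward one chunk at a time, so over its lifetime chunk i shares a window with the next `window - 1` chunks, and all earlier chunks are treated as visible context. The relaxed mask is the union of those visibilities; with `window = 1` it reduces to the strict causal mask.

```python
def block_causal_mask(num_chunks):
    """Strict causality: chunk i attends only to chunks 0..i."""
    return [[j <= i for j in range(num_chunks)] for i in range(num_chunks)]


def sliding_window_mask(num_chunks, window):
    """Relaxed mask (hypothetical): chunk i also attends to the next
    `window - 1` chunks that share a rolling denoising window with it."""
    return [[j < i + window for j in range(num_chunks)] for i in range(num_chunks)]


def show(mask):
    """Render a boolean mask row by row: '#' = attend, '.' = blocked."""
    return "\n".join("".join("#" if v else "." for v in row) for row in mask)


print(show(sliding_window_mask(4, window=2)))
# ##..
# ###.
# ####
# ####
```

The extra entries above the diagonal are what let neighbouring chunks exchange information while both are still being denoised, which is the mechanism the text credits for smoother motion at chunk junctions.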

Performance

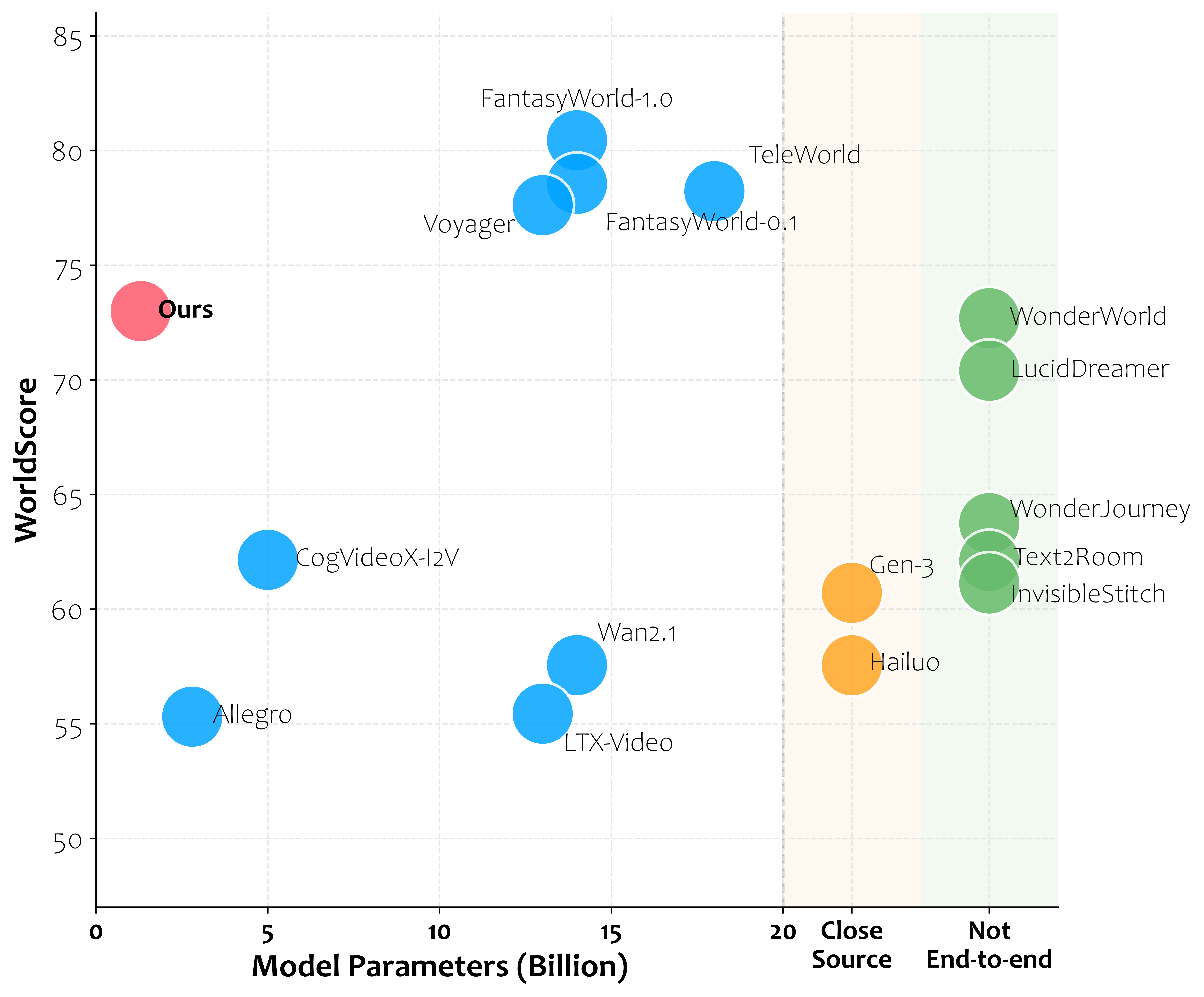

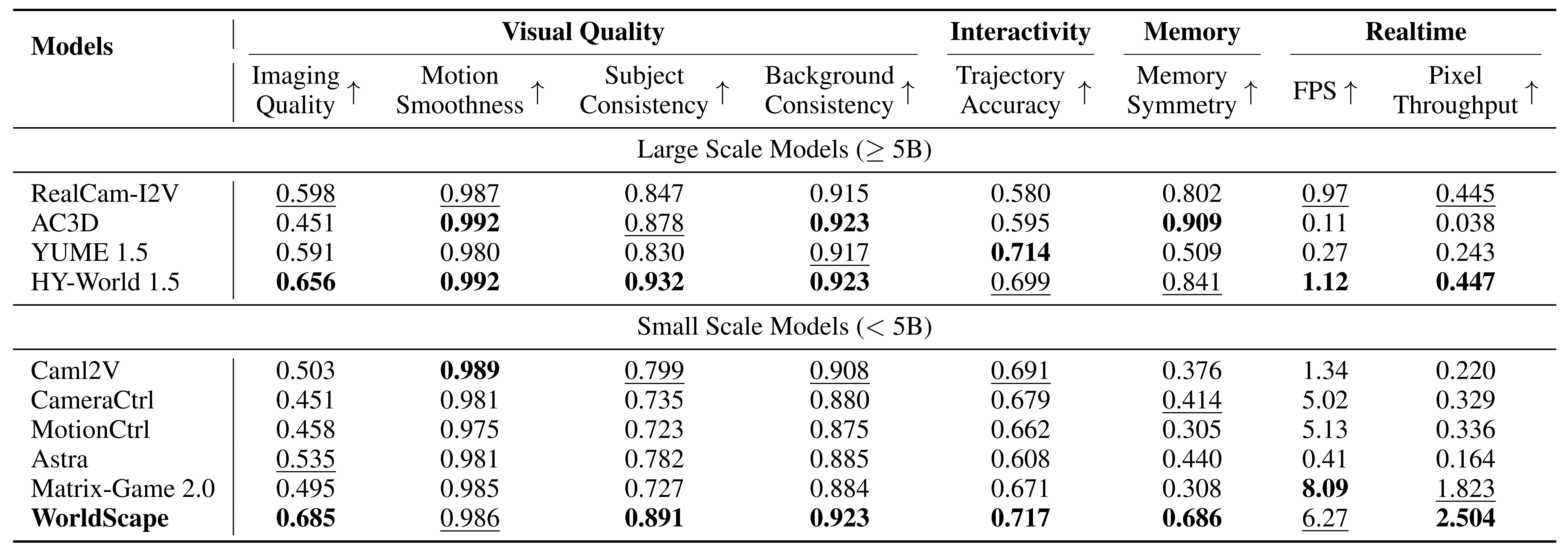

WorldScape's experiments are organized around the four core capabilities of an interactive world model:

- Visual Quality

- Interactivity (accuracy of interactive response)

- Spatial & Long-Horizon Consistency

- Real-Time Capability (real-time generation)

Rather than optimizing any single metric, WorldScape emphasizes balanced, joint optimization across all of these capabilities.

Experimental results show that WorldScape achieves balanced, leading performance across multiple key dimensions, including visual quality, interactive response, 3D spatial consistency, long-term memory, and real-time pixel throughput on a single GPU. It emerges as a general world model that simultaneously reaches an advanced level on all four fronts: high quality, interactivity, memory, and real-time generation.

Demos and Cases

Conclusion

WorldScape overcomes the limitations of existing work in generality, real-time performance, and related aspects. Built on an autoregressive distillation framework enhanced for spatial consistency, it is compatible with different types of action-injection modules, delivering efficient interaction while keeping action following stable, and is expected to become a spatial-intelligence foundation for general embodied agents.